Client:

Intuit, QuickBooks

Timeline:

Ongoing

Role:

Head of Design (Australia & Rest of World)

Scope:

AU + RoW (200+ countries, 13 websites, 10 languages, 6 currencies), later scaled to US, UK, CA

Focus:

AI-enabled workflow design, design operations, process clarity, team velocity

When I took on the Design Manager role, one of the first things I did was pay close attention to where my team's time was actually going.

What I found wasn't surprising - but it was significant. Two patterns kept surfacing. First, designers were regularly interrupted by partners asking questions that already had documented answers: where's the logo, what's the brand green, how do we brief the team, what's the process for X. The information existed. It just wasn't accessible at the moment people needed it.

Second, the briefs coming into the team were consistently either too thin to act on or too bloated to parse. Both produced the same outcome: clarification cycles, delayed starts, and frustrated people on both sides.

The instinctive response to these problems would have been more documentation, more training, more process. I took a different view. These were systems problems, and the right response was a systems solution. This is where AI came in - not as a novelty, but as infrastructure.

I initiated, designed, and built both tools end-to-end.

That means identifying the problem space, defining success criteria, designing the information architecture, selecting and configuring the AI platforms, writing and refining the instruction sets, and driving adoption across teams.

I want to be clear about what that actually involved technically. Building reliable AI tools - particularly custom GPT agents - requires genuine understanding of how these systems behave. I wrote detailed instruction sets that defined tone, terminology, scope boundaries, and escalation behaviour. I tested extensively for instruction drift and edge cases. I made deliberate platform choices based on performance, not convenience - more on that below.

The strategic intent throughout was organisational leverage: build once, reduce friction permanently, and free the team to focus on the work that actually requires a designer.

The problem was straightforward once I named it clearly. Partners were defaulting to messaging my team directly because finding information themselves required knowing where to look - and nobody had time to figure that out under pressure.

The result was constant low-level interruption that broke creative flow without adding any value to either side of the conversation.

I started by auditing Slack channels, emails, and ticket history to map the most common questions - essentially doing user research on my own team's pain points. The answers were concentrated in a surprisingly small set of topics: brand fundamentals, logo and asset access, design team remit, and campaign and channel guidance.

I consolidated everything into a single structured source of truth, then uploaded it into NotebookLM to create BAM AI - a conversational assistant that could answer these questions accurately, instantly, and without pulling a designer out of their work.

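NotebookLM was the right choice here specifically because of its grounding behaviour. Rather than answering from general training data the way general-purpose AI tools do, NotebookLM grounds its responses in the source material you provide and cites the passages it draws on, which makes it far less prone to hallucination or drift.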

Adoption was rapid. Within a month, low-tier brand and process questions had dropped to near zero. Partners had a place to go that worked. Designers stopped being the default answer to questions a document could handle.

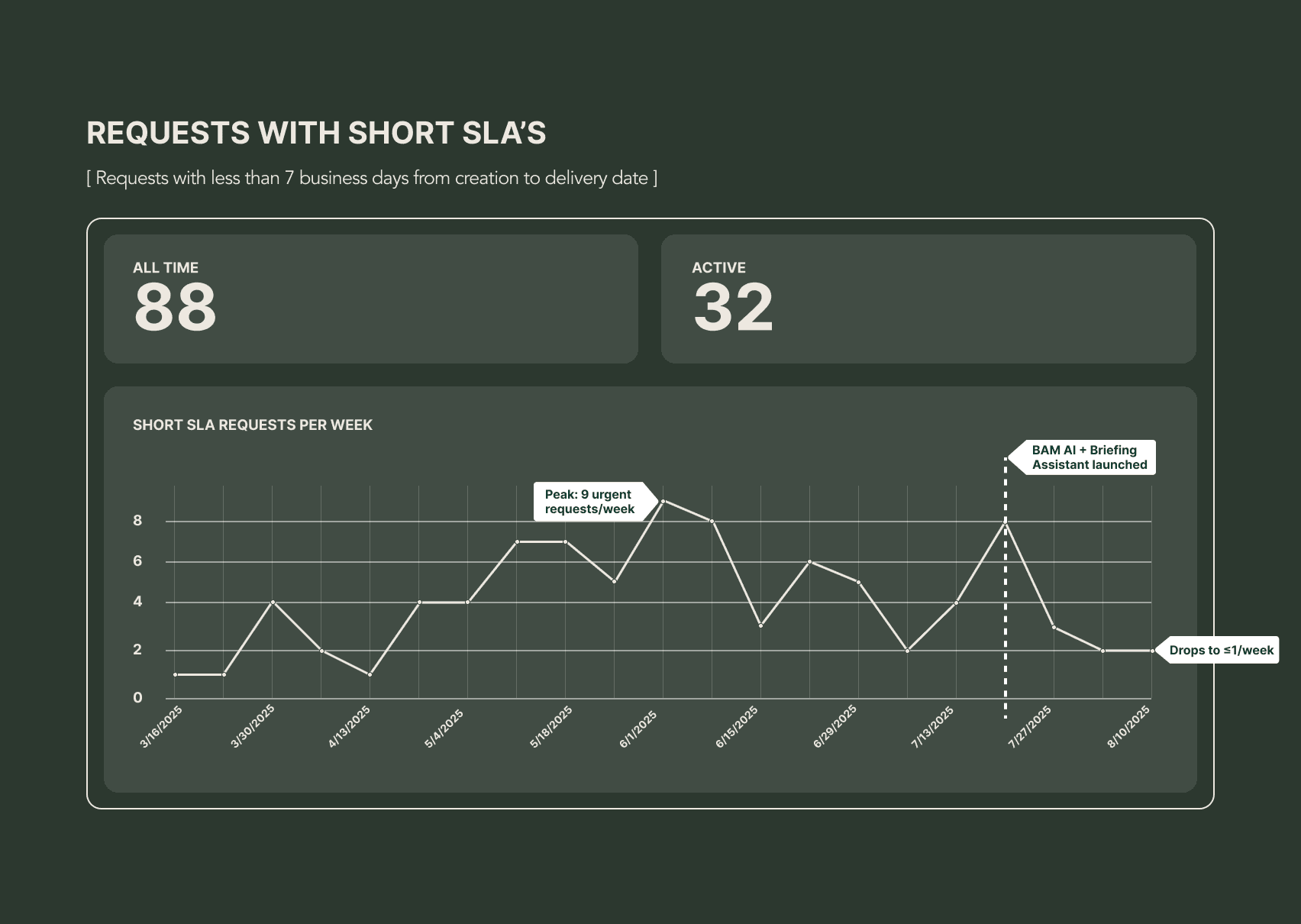

The briefing problem was more complex - and more consequential. Incoming requests were being flagged as urgent at a rate that was making it impossible to plan, resource, or run sprints effectively.

At peak, we were seeing nine urgent-tagged requests in a single week. When I followed up directly with partners, I found that many of these weren't genuinely urgent - teams were submitting with tight timelines to push their projects up the priority queue. The system had no mechanism to distinguish real urgency from strategic urgency, and the design team was absorbing the cost.

The brief quality problem sat upstream of all of it. Briefs that lacked clear objectives, realistic timelines, or relevant context required designers to spend the early phase of every project doing discovery work that partners should have resolved before submission. That's time that doesn't show up as rework in any report, but it compounds quickly across a team.

I designed a custom GPT agent to act as a pre-submission partner for anyone briefing the team. The workflow is simple: a partner uploads their draft brief, and the agent reviews it against a detailed brief standard I wrote - removing irrelevant content, identifying gaps, asking targeted clarifying questions, and outputting a clean structured summary ready for ticket submission. I define the quality bar; the agent enforces it consistently, without any designer involvement.

The instruction set was significant work. I used AI to draft an initial version, then refined it line by line - defining terminology, setting scope boundaries, specifying how the agent should handle ambiguity, and calibrating how deeply it probes based on project complexity.

I also tested across platforms. Gemini showed instruction drift under load - responses started deviating from defined behaviour in ways that would have undermined partner trust. I moved the implementation to a custom ChatGPT agent, which gave me the control and reliability the tool needed to work consistently at scale.

The system architecture: scattered sources consolidated into a single source of truth, surfaced through a conversational AI interface, accessible to all teams and partners.

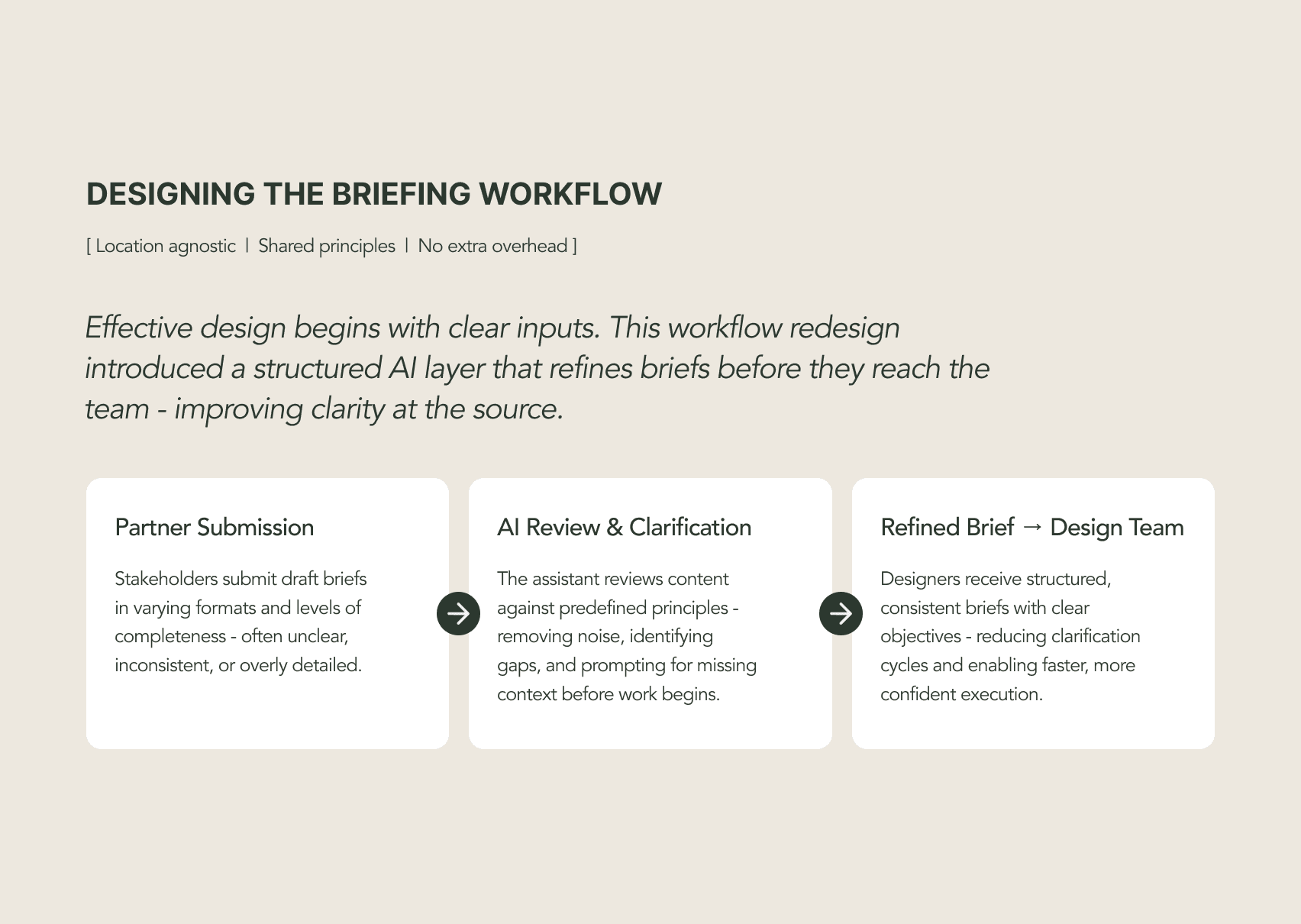

The Briefing Assistant in use. Partners submit briefs directly; the agent reviews, questions, and restructures before anything reaches the design team.

The before and after: from scattered information and interrupt-driven workflows to centralised knowledge and self-serve access.

The three-stage briefing flow: partner submission, AI review and clarification, refined brief delivered to the design team.

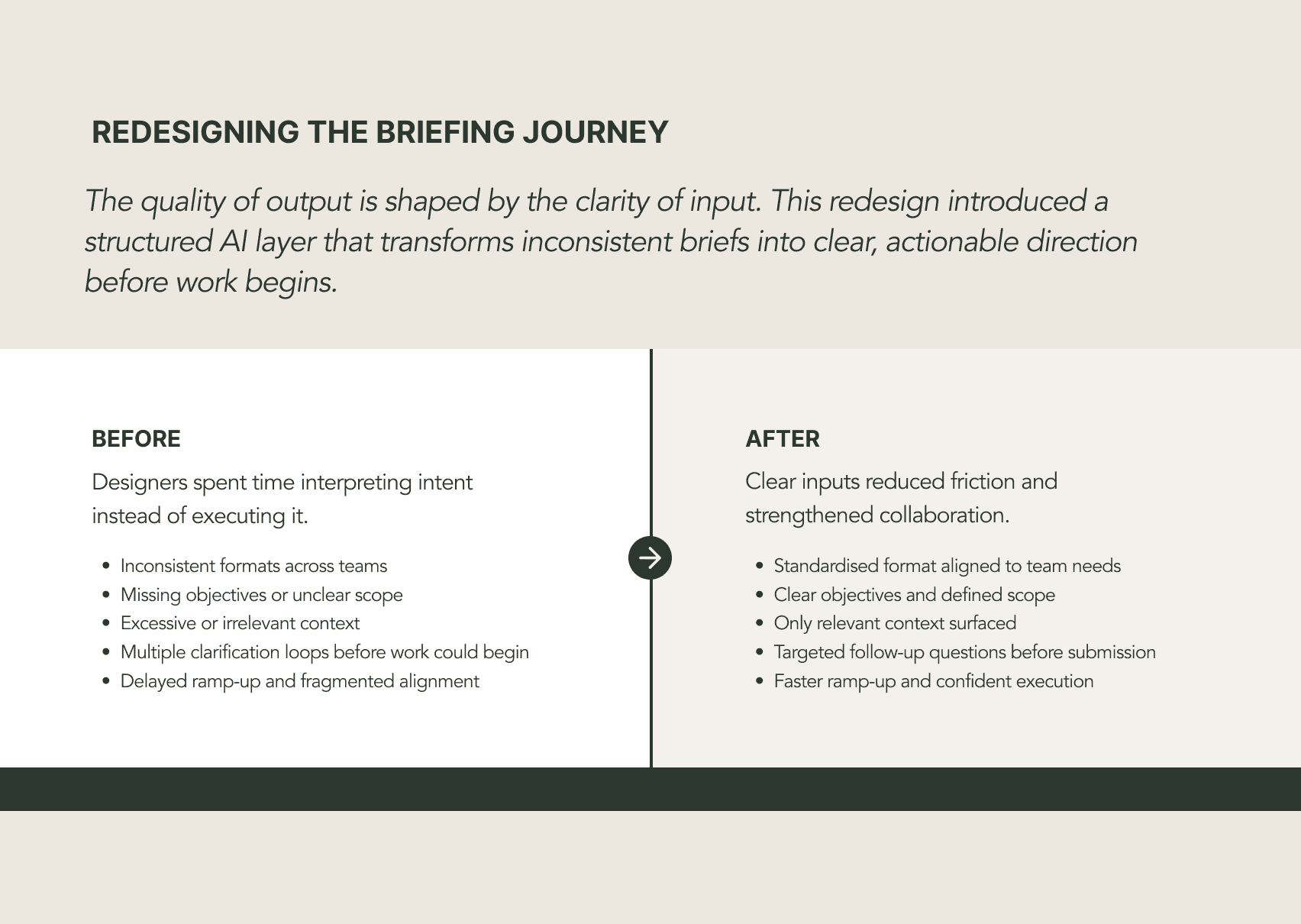

The before and after on brief quality: inconsistent formats, missing objectives, and multiple clarification loops replaced by standardised inputs and confident execution.

The data that made the problem undeniable - and the improvement visible. Urgent-tagged requests peaked at nine in a single week. After the tools launched, that number dropped to one or fewer per week and has held there.

The Briefing Assistant has since been adopted by US, UK, and Canadian teams - built once in APAC, scaled globally without additional overhead or governance.

Urgent-tagged requests dropped from a peak of nine per week to one or fewer - and have stayed there.

Brief quality improved measurably, with designers reporting faster project ramp-up and significantly fewer clarification cycles at the start of engagements. Partners became more self-sufficient, more confident in how to engage the team, and more realistic about timelines when they understood the briefing standard upfront.

The Briefing Assistant has since been adopted by the US, UK, and Canadian teams. A tool built to solve an APAC operations problem turned out to solve a global one - because it was designed around principles, not local process quirks.

The most valuable thing a design leader can do isn't always design. Sometimes it's building the conditions that let design happen well.

Both tools were built on the same belief: that AI is most powerful when it's applied to friction, not novelty.

The technology was the answer to a problem I'd already defined clearly. That order matters.

Design leaders who understand AI at a practical, technical level have an advantage right now - not because AI is a trend worth chasing, but because it opens up a category of solution that wasn't available before. The ability to build tools like these, and to know when and why to build them, is part of what modern design leadership looks like.